Unsupervised learning is a branch of machine learning in which models learn from raw, unlabeled data without explicit guidance. Imagine entering a room full of strangers and discerning patterns based solely on their behavior or attire, with no prior context. Two techniques sit at the heart of unsupervised learning: clustering and dimensionality reduction. Clustering groups similar data points; dimensionality reduction compresses complex datasets into fewer features while preserving essential information.

These techniques work in tandem to uncover patterns and structure in messy data, which makes unsupervised learning valuable for tasks such as customer segmentation, image analysis, and anomaly detection.

Understanding Clustering: Deciphering Unlabeled Data

Clustering is a method for identifying natural groupings within data. It’s akin to sorting a drawer filled with assorted items—coins, keys, pens—without guidance on what belongs together. You might organize them by shape, color, or function. Machines perform this sorting at a scale and speed beyond human capability.

The best-known clustering algorithm is K-Means, which partitions data into a fixed number of clusters chosen in advance. Each point is assigned to the cluster whose mean (centroid) is nearest, and the centroids are recomputed until the assignments stop changing. Despite its simplicity, K-Means is effective for tasks like customer segmentation, categorizing social network posts, and characterizing gene expression.
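To make the nearest-mean idea concrete, here is a minimal sketch of K-Means in NumPy. The function name `kmeans`, the random initialisation, and the toy two-blob dataset are illustrative assumptions, not part of any particular library; production code would normally rely on a tested implementation.

```python
import numpy as np

def kmeans(X, k, n_iters=100, seed=0):
    """Minimal K-Means: alternate assignment and centroid updates."""
    rng = np.random.default_rng(seed)
    # Assumption: initialise centroids from k distinct random data points
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iters):
        # Assignment step: each point goes to its nearest centroid
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Update step: move each centroid to the mean of its assigned points
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break  # centroids stopped moving
        centroids = new_centroids
    return labels, centroids

# Toy data: two well-separated blobs (illustrative only)
rng = np.random.default_rng(42)
X = np.vstack([
    rng.normal(loc=(0.0, 0.0), scale=0.5, size=(50, 2)),
    rng.normal(loc=(5.0, 5.0), scale=0.5, size=(50, 2)),
])
labels, centroids = kmeans(X, k=2)
print(centroids)
```

On data this cleanly separated, each blob should end up in its own cluster. Real data is rarely so tidy, and results can depend on the initial centroids.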

However, K-Means has limitations. It requires the number of clusters to be specified in advance and implicitly assumes that clusters are roughly spherical. When these conditions aren’t met, algorithms like DBSCAN or Hierarchical Clustering come into play. DBSCAN is density-based: it can identify clusters of arbitrary shape and labels points in sparse regions as noise rather than forcing them into a cluster. Hierarchical Clustering builds a tree of nested clusters that can be cut at different levels, which is useful when the number of groups is unknown.
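The density-based idea behind DBSCAN can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions: the function name `dbscan`, the brute-force distance matrix, and the concentric-ring toy data are all hypothetical choices, and the parameters `eps` (neighbourhood radius) and `min_samples` (points needed to form a dense region) would need tuning on real data.

```python
import numpy as np

def dbscan(X, eps, min_samples):
    """Minimal DBSCAN sketch: returns cluster labels, with -1 marking noise."""
    n = len(X)
    # Brute-force pairwise distances; fine for small toy data only
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    neighbors = [np.flatnonzero(dists[i] <= eps) for i in range(n)]
    # A core point has at least min_samples points (itself included) within eps
    is_core = np.array([len(nb) >= min_samples for nb in neighbors])

    labels = np.full(n, -1)  # -1 = noise or not yet assigned
    cluster_id = 0
    for i in range(n):
        if labels[i] != -1 or not is_core[i]:
            continue
        # Grow a new cluster outward from this unassigned core point
        labels[i] = cluster_id
        frontier = [i]
        while frontier:
            p = frontier.pop()
            for q in neighbors[p]:
                if labels[q] == -1:
                    labels[q] = cluster_id
                    if is_core[q]:  # only core points keep expanding
                        frontier.append(q)
        cluster_id += 1
    return labels

def ring(radius, n_points):
    """Evenly spaced points on a circle (toy data helper)."""
    angles = np.linspace(0.0, 2.0 * np.pi, n_points, endpoint=False)
    return np.column_stack([radius * np.cos(angles), radius * np.sin(angles)])

# Two concentric rings plus one isolated point
X = np.vstack([ring(1.0, 40), ring(3.0, 80), [[10.0, 10.0]]])
labels = dbscan(X, eps=0.5, min_samples=3)
print(np.unique(labels))
```

Concentric rings are a shape that nearest-mean assignment cannot separate, since both rings share the same centre. Here the inner ring, the outer ring, and the isolated point are expected to come out as two clusters plus one noise point.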

Clustering is widely used for customer segmentation in marketing, anomaly detection in security, and pattern discovery in image processing. These applications show that even without labeled examples, structure in data can often be detected.

Nevertheless, challenges persist, such as selecting the optimal number of clusters, evaluating their quality, and interpreting them meaningfully. While algorithms assist, human intuition often plays a significant role in understanding what clusters represent.
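One common way to put a number on cluster quality is the silhouette coefficient, which compares how close each point is to its own cluster versus the nearest other cluster. The sketch below is an illustrative NumPy version; the function name `mean_silhouette` and the toy data are assumptions, and it expects at least two clusters.

```python
import numpy as np

def mean_silhouette(X, labels):
    """Mean silhouette coefficient over all points (closer to 1 is better)."""
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    clusters = np.unique(labels)
    scores = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        n_same = same.sum()
        if n_same == 1:
            continue  # convention: a singleton cluster scores 0
        # a: mean distance to the other members of the point's own cluster
        a = dists[i, same].sum() / (n_same - 1)
        # b: smallest mean distance to the members of any other cluster
        b = min(dists[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return scores.mean()

# Toy data: two blobs, scored under a sensible and an arbitrary labelling
rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal(loc=(0.0, 0.0), scale=0.5, size=(30, 2)),
    rng.normal(loc=(5.0, 5.0), scale=0.5, size=(30, 2)),
])
good_labels = np.repeat([0, 1], 30)  # matches the two blobs
poor_labels = np.arange(60) % 2      # arbitrary alternating split
good = mean_silhouette(X, good_labels)
poor = mean_silhouette(X, poor_labels)
print(good, poor)
```

Running a clustering algorithm for several candidate cluster counts and comparing scores like this is one heuristic for choosing the number of clusters. It does not replace human judgment about what the clusters mean.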

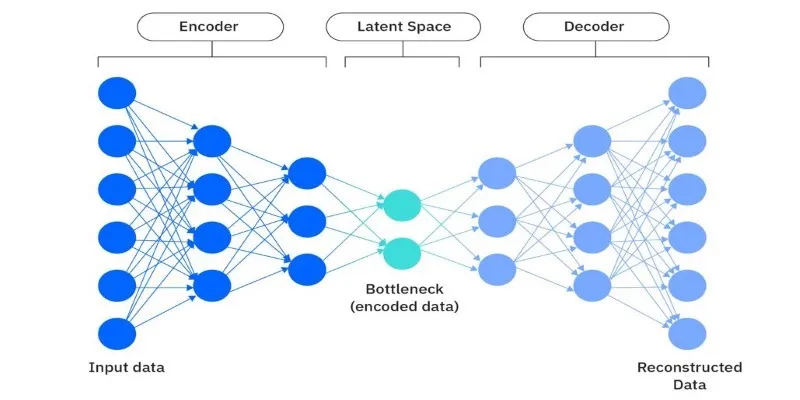

Dimensionality Reduction: Simplifying Complexity

Imagine trying to appreciate a painting through a microscope: too much detail obscures the big picture. High-dimensional data poses a similar problem. As the number of features grows, the data becomes sparse and noisy and harder to work with, a phenomenon known as the “curse of dimensionality.” Dimensionality reduction offers a solution.

The aim is to reduce the number of features while preserving as much relevant information as possible. Principal Component Analysis (PCA) is a widely used technique that transforms the original data into new, uncorrelated variables called principal components, ordered by how much of the data’s variance each one captures. Keeping only the first few components is akin to photographing a 3D object from the angle that shows the most of its shape: some detail is lost, but the overall structure remains.
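As a rough illustration, PCA can be computed from the singular value decomposition of the centred data. The function name `pca` and the synthetic dataset below (3D points lying close to a 2D plane) are assumptions made for this sketch.

```python
import numpy as np

def pca(X, n_components):
    """PCA via SVD: returns projected data, components, explained variance ratio."""
    X_centered = X - X.mean(axis=0)  # PCA works on mean-centred data
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]   # rows are the principal directions
    variance = S ** 2                # proportional to variance per component
    explained_ratio = variance[:n_components] / variance.sum()
    return X_centered @ components.T, components, explained_ratio

# Synthetic data: 3D points that lie near a 2D plane, plus a little noise
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2)) * np.array([3.0, 1.0])
mixing = np.array([[1.0, 0.0, 1.0],
                   [0.0, 1.0, 1.0]])
X = latent @ mixing + 0.05 * rng.normal(size=(200, 3))

X_reduced, components, explained_ratio = pca(X, n_components=2)
print(X_reduced.shape, explained_ratio.sum())
```

Because the data was built to be nearly planar, two components should retain almost all of the variance. On real data, the explained variance ratio is a useful guide to how many components are worth keeping.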

PCA is valuable for revealing hidden patterns and reducing complexity, which helps downstream tasks like clustering and visualization. However, PCA is linear: it assumes the important structure lies along straight directions in feature space. For data with more complex structure, non-linear methods like t-SNE (t-distributed Stochastic Neighbor Embedding) or UMAP (Uniform Manifold Approximation and Projection) are often preferable. These methods excel at preserving local structure, keeping points that are close in the original space close in the reduced one, which makes them well suited to images, audio, or word embeddings.

A practical application of dimensionality reduction is facial recognition. A photo contains thousands of pixels, each a dimension. Reducing this to essential features enables real-time facial matching and recognition.

In genomics, where researchers manage datasets with thousands of genes per sample, dimensionality reduction highlights crucial elements. In finance, it simplifies extensive transaction history datasets, aiding in fraud detection or trend analysis.

The challenge lies in interpretation. The new features are combinations of the original ones, so their meaning can be hard to explain. Balancing accuracy and interpretability is an ongoing consideration.

Combining Clustering and Dimensionality Reduction

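These techniques often complement each other. Dimensionality reduction strips out noise and redundant features, and clustering on the reduced data tends to be more stable and meaningful. A common workflow is to reduce the data with PCA before clustering. t-SNE is sometimes used the same way, though it can distort distances and cluster sizes, so clusters found in a t-SNE embedding deserve extra scrutiny.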

In visual analytics, a high-dimensional dataset might first be compressed using UMAP, followed by grouping with K-Means, revealing clusters otherwise hidden in raw data.
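The reduce-then-cluster workflow can be sketched end to end. To stay dependency-light, this example substitutes PCA for UMAP and uses a small hand-written K-Means with a deterministic farthest-point initialisation; the function names, the initialisation scheme, and the synthetic 50-dimensional data are all assumptions made for illustration.

```python
import numpy as np

def pca_project(X, n_components):
    """Project centred data onto its top principal components (via SVD)."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:n_components].T

def farthest_point_init(X, k):
    """Assumed seeding scheme: start at X[0], then repeatedly add the point
    farthest from the centroids chosen so far."""
    centroids = [X[0]]
    for _ in range(k - 1):
        d = np.min([np.linalg.norm(X - c, axis=1) for c in centroids], axis=0)
        centroids.append(X[d.argmax()])
    return np.array(centroids)

def kmeans(X, k, n_iters=100):
    """Minimal K-Means: assign to nearest centroid, then recompute means."""
    centroids = farthest_point_init(X, k)
    for _ in range(n_iters):
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        new = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new, centroids):
            break
        centroids = new
    return labels

# Synthetic data: 3 groups of 40 points in 50 dimensions; only the first
# three dimensions carry group structure, the rest is noise
rng = np.random.default_rng(0)
centers = np.zeros((3, 50))
centers[0, 0] = centers[1, 1] = centers[2, 2] = 10.0
X = np.vstack([c + rng.normal(size=(40, 50)) for c in centers])

X_2d = pca_project(X, n_components=2)  # step 1: compress 50 features to 2
labels = kmeans(X_2d, k=3)             # step 2: cluster in the reduced space
print(np.bincount(labels))
```

The two-dimensional projection is also easy to plot, which is part of the appeal of this workflow in visual analytics.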

This synergy is evident in recommendation systems. With thousands of users and films, each with unique features, dimensionality reduction highlights influential preferences. Clustering then identifies similar users or film categories, enabling sharper, more relevant recommendations.

In healthcare, AI analyzes complex data from patient histories, lab results, and genetics. Dimensionality reduction distills variables into essential patterns, while clustering reveals subgroups, potentially uncovering new disease types or risk factors.

Together, these tools allow machines to sort and simplify data even without labels or prior context.

Conclusion

Unsupervised learning, through clustering and dimensionality reduction, provides powerful tools for uncovering hidden patterns in complex, unlabeled data. These techniques simplify overwhelming noise, helping machines find structure and meaning. While human interpretation remains crucial, these methods offer invaluable support across diverse fields, from healthcare to marketing. As AI evolves, unsupervised learning will continue to be a vital method for extracting insights from raw, unstructured data, driving innovation and discovery in unexplored ways.
