Discretization in machine learning plays a crucial role in transforming continuous data into more manageable and analyzable forms. Many real-world datasets include numerical values that vary widely, making them too detailed for certain models or interpretations. Discretization addresses this by converting continuous values into distinct groups or intervals.

This guide explains what discretization is, the main types, where it is applied, and the advantages and disadvantages of the technique. It’s designed to be easily understood by both beginners and intermediate learners.

What Is Discretization in Machine Learning?

In data processing, discretization involves converting continuous numerical data into discrete groups or bins. For instance, discretization can transform numbers like 3.2, 7.5, or 10.8 into specific ranges such as “Low,” “Medium,” or “High” instead of using them as raw numbers.

Consider a dataset with ages ranging from 0 to 100. Instead of inputting raw ages into a model, these values can be categorized as follows:

- 0–18: Teen

- 19–35: Young Adult

- 36–60: Middle Aged

- 61–100: Senior

By converting continuous values into ranges, discretization simplifies the data, which can help machine learning models that work better with categorical inputs.
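The age grouping above can be sketched with pandas. This is a minimal illustration, not a definitive implementation: the sample ages are made up, and the edge handling (each bin includes its upper bound) is an assumption chosen to match the listed ranges.

```python
import pandas as pd

# Hypothetical sample ages, invented for illustration.
ages = pd.Series([4, 17, 18, 19, 35, 36, 60, 61, 100])

# Edges mirror the ranges in the list: 0-18, 19-35, 36-60, 61-100.
# pd.cut closes each bin on the right by default, so 18 lands in "Teen";
# include_lowest=True makes sure an age of exactly 0 is not dropped.
bin_edges = [0, 18, 35, 60, 100]
labels = ["Teen", "Young Adult", "Middle Aged", "Senior"]

age_groups = pd.cut(ages, bins=bin_edges, labels=labels, include_lowest=True)
print(age_groups.tolist())
```

The result is a categorical column that can be passed to a model or used for grouping in place of the raw ages.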

Why Is Discretization Important in Machine Learning?

Discretization is particularly valuable in modeling scenarios where raw continuous data may introduce noise or confusion. Some models, such as Naive Bayes classifiers that assume categorical features, often benefit from binned inputs. Decision trees can split on continuous values directly, though binning can make their rules easier to read.

Reasons why machine learning practitioners rely on discretization include:

- Improves model interpretability: Discrete categories are easier to comprehend.

- Simplifies data: Converts complex continuous data into manageable intervals.

- Reduces noise: Small variations in continuous data may not contribute to predictions.

- Helps specific algorithms: Some models struggle with continuous inputs.

- Handles outliers better: Discretization can lessen the impact of extreme values.

Types of Discretization Techniques

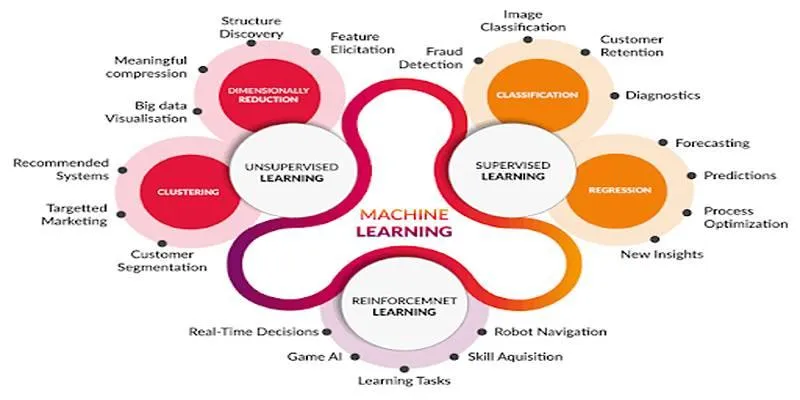

Discretization methods can be categorized into unsupervised and supervised approaches. The choice of method depends on the dataset, the model being used, and the analysis objective.

Unsupervised Discretization

Unsupervised techniques disregard the target variable, focusing solely on the distribution of the input feature. Two main unsupervised techniques are:

Equal Width Binning

In equal-width binning, the entire range of values is divided into bins of equal width, (max − min) / k for k bins. For example, integer values from 0 to 100 divided into five bins of width 20 would be:

- Bin 1: 0–20

- Bin 2: 21–40

- Bin 3: 41–60

- Bin 4: 61–80

- Bin 5: 81–100

Advantages: Easy to implement and simple to understand.

Disadvantages: Can lead to unequal data distribution and may create empty or overloaded bins.
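A minimal sketch of equal-width binning with pandas and NumPy follows. The sample values are hypothetical and deliberately clustered at the low end, so the per-bin counts show the uneven distribution mentioned above.

```python
import numpy as np
import pandas as pd

# Hypothetical values, clustered toward the low end of the range.
values = pd.Series([0, 5, 12, 20, 33, 47, 58, 64, 79, 100])

# Five equal-width bins over 0..100 need six edges: 0, 20, 40, 60, 80, 100.
edges = np.linspace(0, 100, num=6)

# labels=False returns the bin index (0..4) instead of an interval label.
# Bins are closed on the right, so 20 falls in bin 0;
# include_lowest=True keeps the value 0 in bin 0 as well.
bin_index = pd.cut(values, bins=edges, labels=False, include_lowest=True)

# Counting values per bin shows how unevenly the data is spread.
counts = bin_index.value_counts().sort_index()
print(counts)
```

Passing an integer instead (`bins=5`) lets pandas derive the edges from the data's minimum and maximum.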

Equal Frequency Binning

Also known as quantile binning, this technique ensures each bin contains roughly the same number of data points. For a dataset with 100 values and 4 bins, each bin would hold 25 values.

Advantages: Distributes data evenly across bins and is effective for skewed datasets.

Disadvantages: Produces unequal bin widths, and similar values may be placed in separate bins.
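Equal-frequency binning can be sketched with `pd.qcut`. The data here is hypothetical: 100 distinct, right-skewed values, chosen so that the four bins hold 25 values each while their widths differ.

```python
import numpy as np
import pandas as pd

# Hypothetical right-skewed data: the squares of 1..100 (100 distinct values).
values = pd.Series(np.arange(1, 101) ** 2)

# q=4 requests quartiles; labels=False returns the bin index (0..3),
# and retbins=True also returns the computed bin edges.
quartile, edges = pd.qcut(values, q=4, labels=False, retbins=True)

counts = quartile.value_counts().sort_index()
widths = np.diff(edges)

print(counts.tolist())  # number of values per bin
print(widths)           # bin widths grow as the data thins out
```

With heavily repeated values the bin counts may not come out equal, since identical values always share a bin.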

Supervised Discretization

Supervised methods utilize the target variable to form bins, aiming to enhance the predictive power of the bins. A common method in this category is:

Decision Tree-Based Binning

This method employs a decision tree algorithm to split the continuous variable into categories, automatically finding the best cut points based on the target variable’s distribution.

Advantages: Produces bins optimized for the learning task and performs well in classification problems.

Disadvantages: May overfit the data and is more computationally expensive.
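One way to sketch tree-based binning is to fit a shallow scikit-learn decision tree on a single feature and reuse its split thresholds as bin edges. The data and the labeling rule below are hypothetical, and limiting the tree with `max_leaf_nodes` is an assumption made here to cap the number of bins.

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier

# Hypothetical data: ages 18..79, with the positive class only for ages 30..59.
ages = np.arange(18, 80)
target = ((ages >= 30) & (ages < 60)).astype(int)

# max_leaf_nodes caps the number of bins the tree can produce.
tree = DecisionTreeClassifier(max_leaf_nodes=3, random_state=0)
tree.fit(ages.reshape(-1, 1), target)

# Internal (split) nodes have a feature index >= 0; leaves are marked with -2.
is_split = tree.tree_.feature >= 0
cut_points = np.sort(tree.tree_.threshold[is_split])

# right=True sends values equal to a threshold to the lower bin,
# matching the tree's "x <= threshold goes left" rule.
bins = np.digitize(ages, cut_points, right=True)
print(cut_points)
```

In practice the cut points should be learned on training data only and then applied to validation or test data, which helps guard against the overfitting risk noted above.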

When Should Discretization Be Used?

Discretization is not always necessary but is highly beneficial in certain scenarios:

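- When using models that expect categorical features, such as Naive Bayes, or linear models such as logistic regression where binning lets the model capture a non-linear effect.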

- When data contains outliers that need smoothing.

- If features are highly detailed, causing noise in the learning process.

- For improved interpretability, especially in business or healthcare applications.

- During visualization, where grouped data is easier to plot and analyze.

Pros and Cons of Discretization

While discretization offers many benefits, it also presents some limitations.

Pros:

- Makes data easier to interpret.

- Useful for specific machine learning models.

- Helps reduce overfitting in some cases.

- Assists with handling noisy or outlier-heavy data.

Cons:

- Loss of information: Fine-grained differences are removed.

- May introduce bias if bin thresholds are not well-chosen.

- Can hurt performance for models that prefer continuous input (like linear regression).

Best Practices for Discretization

Properly executed discretization can significantly enhance model clarity and usability. Here are some best practices:

- Use domain knowledge to define meaningful bin boundaries.

- Visualize data before binning to understand distributions.

- Avoid too many bins, which may lead to overfitting or complicate interpretation.

- Test different techniques and evaluate model performance to choose the best method.

- Check how samples and target classes are distributed across bins, so that no bin is nearly empty or dominated by a handful of points.

Tools and Libraries for Discretization

Most data science tools and libraries offer built-in functions for discretization, including:

- Pandas (Python): pd.cut() for equal-width and pd.qcut() for equal-frequency binning.

- Scikit-learn: KBinsDiscretizer for various binning strategies.

- R programming: cut() and other binning functions.

- Weka: Provides supervised discretization as part of its preprocessing steps.

These tools automate and simplify the discretization process.
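As a brief illustration, scikit-learn's `KBinsDiscretizer` can be used as follows. The input array is hypothetical, and the parameter choices (`n_bins=4`, ordinal encoding, the uniform strategy) are assumptions picked for a small, readable example.

```python
import numpy as np
from sklearn.preprocessing import KBinsDiscretizer

# Hypothetical single-feature data; scikit-learn expects a 2-D array.
X = np.array([[0.0], [10.0], [25.0], [50.0], [75.0], [100.0]])

# strategy="uniform" gives equal-width bins; "quantile" and "kmeans" are
# the other built-in strategies. encode="ordinal" returns bin indices.
discretizer = KBinsDiscretizer(n_bins=4, encode="ordinal", strategy="uniform")
codes = discretizer.fit_transform(X).ravel()

print(discretizer.bin_edges_[0])  # learned edges for the first feature
print(codes)                      # bin index for each row
```

Because the discretizer follows the usual fit/transform interface, it can be placed inside a scikit-learn `Pipeline` so that bin edges are learned from the training split only.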

Conclusion

Discretization in machine learning is a powerful technique for transforming continuous data into understandable and usable categories. Whether through equal-width binning, equal-frequency binning, or supervised methods like decision tree splits, discretization can help models learn better, particularly when working with categorical algorithms or noisy datasets. It enhances interpretability, reduces noise, and supports various real-world applications from healthcare to finance. While not necessary for every model, knowing when and how to apply discretization is a valuable skill for any data scientist or machine learning engineer.
