As artificial intelligence continues to evolve, its integration into the workplace has become increasingly common. ChatGPT, developed by OpenAI, is one such tool that professionals across industries are using to boost efficiency, automate repetitive tasks, and brainstorm ideas. However, the line between responsible use and misuse is not always clear.

While ChatGPT can certainly enhance productivity, employees must be cautious. Inappropriate or unethical usage can potentially breach company policies, violate confidentiality agreements, or compromise quality standards—each of which may have serious consequences, including termination.

This post explores 10 common workplace scenarios where using ChatGPT might put an employee’s job at risk, analyzing the gray areas between innovation and misconduct.

1. Submitting AI-Generated Content as Original Work

Employees in content creation roles—such as writers, marketers, or communication specialists—may be tempted to rely entirely on ChatGPT to produce written materials. While this can save time, submitting unedited, AI-generated work as original content can be a serious misstep.

Many companies view such actions as plagiarism or misrepresentation, particularly if the content is expected to reflect a human’s unique voice or creativity.

2. Relying on ChatGPT for Employee Performance Reviews

In management roles, conducting performance evaluations requires careful judgment, context, and personal insight. Using ChatGPT to write reviews without personal input or oversight can produce generic assessments that misrepresent an employee's actual performance.

If the misuse of AI in this context results in biased evaluations or HR disputes, the responsible manager may face consequences. Depending on the severity, it could damage employee relations or even result in termination for failing to fulfill managerial duties appropriately.

3. Sharing Confidential Company Information with ChatGPT

One of the most sensitive issues involving AI tools like ChatGPT is data privacy. If an employee inputs confidential business documents, client information, or proprietary data into ChatGPT, they may unintentionally breach non-disclosure agreements or corporate confidentiality policies.

Although AI providers typically describe safeguards such as anonymizing and securing user data, companies remain wary of third-party platforms handling sensitive information. In regulated industries or highly secure environments, this type of breach could justify immediate termination and potentially legal action.
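One practical precaution is to strip obvious identifiers from text before pasting it into any third-party tool. The sketch below is only an illustration of that idea, not a complete safeguard: the pattern names, the `ACCT-` account format, and the `redact` helper are all hypothetical, and a real company policy would define what counts as sensitive.

```python
import re

# Hypothetical patterns for illustration only; a real policy would
# define which kinds of data must never leave the company.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "ACCOUNT": re.compile(r"\bACCT-\d{6,}\b"),  # assumed internal ID format
}


def redact(text: str) -> str:
    """Replace each match of a known pattern with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


if __name__ == "__main__":
    draft = "Contact jane.doe@example.com or 555-123-4567 about ACCT-00123456."
    print(redact(draft))
```

Pattern matching of this kind catches only predictable formats. It will not recognize trade secrets, client names, or strategy details written in plain prose, so it complements rather than replaces the company's confidentiality rules.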

4. Using ChatGPT to Generate Business Reports or Financial Documents

ChatGPT can summarize and structure information efficiently, but when used to prepare reports involving financial projections, strategic insights, or analytical conclusions, the risk of inaccuracies rises. AI lacks contextual understanding and real-time data verification.

If an employee submits AI-generated reports containing factual or financial errors, it may undermine business decisions. In sectors like finance or consulting, such mistakes could erode trust and expose the company to risk. Repeated offenses may lead to dismissal due to incompetence or negligence.

5. Automating Internal or External Communication

Employees may use ChatGPT to automate internal emails, Slack messages, or customer communications. While efficiency is important, sending unreviewed, AI-generated messages can backfire if the tone is inappropriate, insensitive, or impersonal.

Clients and colleagues may interpret these messages as robotic or dismissive, damaging professional relationships. In customer-facing roles or communications departments, this could lead to reputational harm—and potentially cost the employee their position if complaints or errors escalate.

6. Composing Professional Emails Entirely Through AI

Writing clear, professional emails is a common workplace challenge. While ChatGPT can assist by generating drafts or outlining ideas, copying AI-generated text verbatim without editing or tailoring can reflect poorly on the sender.

If this approach becomes a pattern, especially in roles that demand personalized communication, it may be viewed as a lack of professionalism. Employers may interpret this as an unwillingness to engage thoughtfully, which could lead to poor performance evaluations or eventual termination.

7. Conducting Research Without Verifying AI Outputs

ChatGPT is often used for quick research, especially for general knowledge or topic overviews. However, relying solely on ChatGPT without cross-referencing reputable sources can lead to the spread of misinformation.

If an employee bases their work—such as presentations, reports, or product descriptions—on inaccurate information from AI, the impact can be substantial. Misrepresentation or factual errors in high-stakes environments may cost clients, damage credibility, and result in job loss due to unreliable work output.

8. Copying AI-Generated Code Without Reviewing for Copyright Issues

Software developers and programmers sometimes turn to ChatGPT for quick coding solutions or problem-solving assistance. While it’s acceptable to use AI for support, directly pasting code into production environments without understanding or modifying it may introduce security risks or licensing issues.

Since AI-generated code may replicate copyrighted structures or introduce vulnerabilities, companies often enforce strict guidelines. Developers who bypass these guidelines may face dismissal for code misuse or negligence—especially if the product fails or exposes the system to threats.

9. Using ChatGPT for Editing When Manual Review Is Expected

In editorial, academic, or publishing environments, editing often requires attention to style, tone, and context. Using ChatGPT to automatically polish content may seem efficient. Still, if the editor’s core responsibilities are reduced to AI-assisted shortcuts, it could be perceived as a failure to fulfill the role’s requirements.

Employers may view this as an ethical lapse, particularly when the position demands human nuance. If this pattern continues, the employee risks being replaced or terminated for outsourcing tasks that require skilled human oversight.

10. Misinterpreting or Misusing Financial and Strategic Data via ChatGPT

Analyzing charts, financial forecasts, or business performance metrics requires critical thinking and specialized knowledge. While ChatGPT can help explain basic concepts or formats, it cannot be relied on to interpret dynamic data or market trends accurately.

If an employee attempts to use ChatGPT for financial interpretation without verification, the resulting decisions may be flawed. In roles tied to business strategy, accounting, or investment planning, such errors can carry serious consequences.

Conclusion

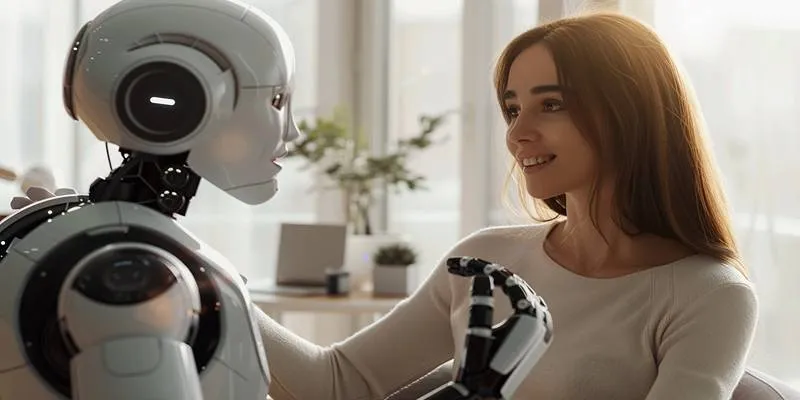

ChatGPT is a powerful and flexible tool that has opened new doors for efficiency and innovation in the workplace. However, its capabilities must be balanced with responsibility, professional standards, and organizational expectations. Misusing ChatGPT can blur ethical boundaries, compromise accuracy, and even violate legal or contractual obligations.

The core issue is not the use of AI itself but how and when it is used. Companies are increasingly developing internal guidelines to ensure AI use aligns with job roles and protects company interests.
