The Kolmogorov-Arnold Network (KAN) is a network architecture rooted in the Kolmogorov-Arnold representation theorem, a result from function theory and approximation. It is of growing interest in machine learning and computational frameworks. This article covers its theoretical foundation, its structure, its practical applications, and its relevance to high-dimensional problems.

Overview of the Kolmogorov-Arnold Network

The Kolmogorov-Arnold Network is a mathematical construct that draws on functional analysis and approximation theory to represent complex high-dimensional functions. It rests on a theorem proved by the mathematician Andrey Kolmogorov in 1957, with closely related work by his student Vladimir Arnold: any continuous function of several variables on a bounded domain can be represented using only addition and compositions of continuous functions of a single variable. This suggests that even intricate nonlinear functions can be expressed using simple univariate elements interconnected in a network-like fashion.

Historical Background

The Kolmogorov-Arnold representation theorem is a landmark in functional analysis and mathematical modeling. Andrey Kolmogorov’s result showed that continuous multivariate functions can be represented as sums and compositions of univariate functions. This allows complex functions to be broken down into simpler components, which aids both calculation and theoretical analysis.

Key Contributors

Building on Kolmogorov’s initial idea, Vladimir Arnold made substantial contributions by refining and formalizing the theorem. His work strengthened the mathematical rigor of the representation and clarified the conditions under which such decompositions hold. The result applies to all continuous functions on a bounded domain, and it has since been connected to fields like differential equations, numerical analysis, and machine learning. Together, their work established the Kolmogorov-Arnold representation theorem as a landmark result in modern mathematics.

Theoretical Framework

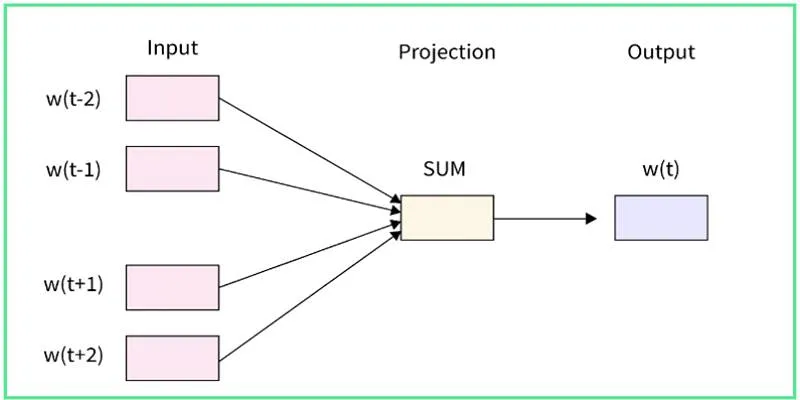

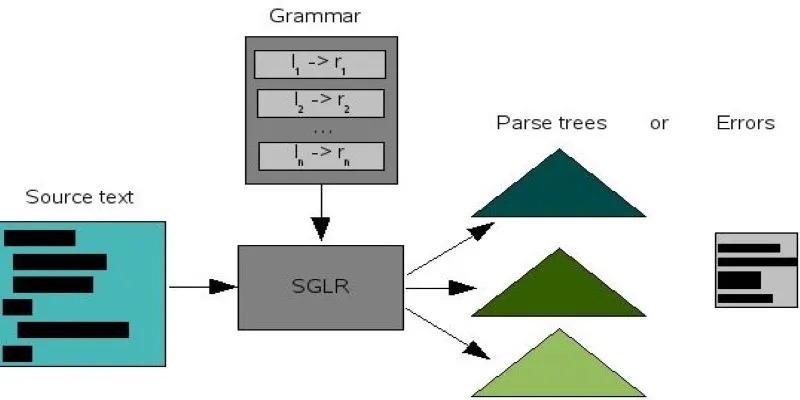

The Kolmogorov-Arnold theorem states that any multivariate continuous function on a bounded domain can be written as a finite sum of compositions of continuous univariate functions, with addition as the only operation that combines several variables. Formally, for a continuous function \( f \colon [0,1]^n \to \mathbb{R} \), there exist continuous univariate functions \( \varphi_i \) and \( \psi_{ij} \) such that:

\[f(x_1, x_2, \dots, x_n) = \sum_{i=1}^{2n+1} \varphi_i \left( \sum_{j=1}^n \psi_{ij}(x_j) \right).\]

This result, established by Kolmogorov and refined through Arnold’s work, offers a framework for reducing complex, high-dimensional functions to sums and compositions of one-dimensional ones. This decomposition is especially valuable for understanding and working with functions in high-dimensional spaces.
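As a small worked illustration of this shape (chosen here for convenience, and not the construction used in the theorem's proof), the product of two variables can be written with the polarization identity:

\[x_1 x_2 = \tfrac{1}{4}\,(x_1 + x_2)^2 - \tfrac{1}{4}\,(x_1 - x_2)^2.\]

This matches the general form with \( n = 2 \) if we take the outer functions \( \varphi_1(u) = u^2/4 \) and \( \varphi_2(u) = -u^2/4 \), and the inner functions \( \psi_{11}(t) = t \), \( \psi_{12}(t) = t \), \( \psi_{21}(t) = t \), \( \psi_{22}(t) = -t \). The remaining three outer functions allowed by the bound \( 2n+1 = 5 \) can be taken to be identically zero.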

Mathematical Representation and Decomposition of Functions

The theorem’s decomposition strategy emphasizes two key components. First, the inner functions \( \psi_{ij}(x_j) \) transform individual input variables, capturing their contribution to the overall function. Second, the outer functions \( \varphi_i \) systematically combine these transformed values. This structured decomposition allows for the analysis and approximation of multivariate functions without exhaustive exploration of their entire domain.
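The two-step structure described above (inner functions applied per variable, then outer functions applied to the sums) can be sketched in a few lines of Python. The helper name `ka_evaluate` and the example functions are assumptions made for illustration. The example uses the identity \( x_1 x_2 = (x_1 + x_2)^2/4 - (x_1 - x_2)^2/4 \), which has the right shape but is not the construction from the theorem's proof.

```python
from typing import Callable, Sequence

Univariate = Callable[[float], float]


def ka_evaluate(
    outer: Sequence[Univariate],
    inner: Sequence[Sequence[Univariate]],
    x: Sequence[float],
) -> float:
    """Evaluate sum_i outer[i]( sum_j inner[i][j](x[j]) )."""
    total = 0.0
    for phi_i, psi_row in zip(outer, inner):
        # Inner step: transform each input variable separately, then add.
        s = sum(psi_ij(x_j) for psi_ij, x_j in zip(psi_row, x))
        # Outer step: apply the univariate outer function to that sum.
        total += phi_i(s)
    return total


# Illustrative choice (an assumption, not the theorem's construction):
# x1 * x2 = (x1 + x2)^2 / 4 - (x1 - x2)^2 / 4
outer = [lambda u: u * u / 4.0, lambda u: -u * u / 4.0]
inner = [
    [lambda t: t, lambda t: t],   # feeds the first outer function: x1 + x2
    [lambda t: t, lambda t: -t],  # feeds the second outer function: x1 - x2
]

value = ka_evaluate(outer, inner, [0.5, 0.25])
print(value)  # 0.5 * 0.25 = 0.125
```

Only univariate functions and addition appear in `ka_evaluate`; all dependence on the specific target function lives in the lists `outer` and `inner`.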

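By reducing a multivariate problem to univariate pieces, the Kolmogorov-Arnold theorem suggests a way around the curse of dimensionality, although the univariate functions it guarantees are only continuous and can be hard to compute or approximate in practice. It nonetheless breaks seemingly intractable problems into manageable parts and motivates numerical methods and computational techniques in function approximation.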

High-Dimensional Function Approximation

Approximating functions in high-dimensional spaces is notoriously challenging, often requiring extensive data and computational resources. The Kolmogorov-Arnold theorem speaks to these issues by showing that complex multivariate functions can be expressed using sums and compositions of univariate functions. This insight has significant implications for fields like machine learning, where models frequently handle high-dimensional input data.

Guided by this theorem, algorithms can optimize simpler, one-dimensional functions instead of directly tackling the full dimensionality of a problem. In this sense the Kolmogorov-Arnold theorem serves as both a theoretical result and a practical design principle for high-dimensional analysis in scientific and engineering disciplines.
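To make the idea of optimizing a one-dimensional function concrete, the sketch below fits a single univariate function by gradient descent. The piecewise-linear parameterization, the function names (`locate`, `evaluate`, `fit_univariate`), the target `wave`, and all hyperparameters are illustrative assumptions, not part of the theorem or of any particular KAN implementation.

```python
import math
from typing import Callable, List, Tuple


def locate(x: float, num_knots: int) -> Tuple[int, float]:
    """Return (left knot index, fractional offset) of x on a uniform grid over [0, 1]."""
    pos = min(max(x, 0.0), 1.0) * (num_knots - 1)
    left = min(int(pos), num_knots - 2)
    return left, pos - left


def evaluate(coeffs: List[float], x: float) -> float:
    """Piecewise-linear function whose value at knot k is coeffs[k]."""
    left, frac = locate(x, len(coeffs))
    return (1.0 - frac) * coeffs[left] + frac * coeffs[left + 1]


def fit_univariate(
    target: Callable[[float], float],
    num_knots: int = 17,
    num_samples: int = 129,
    steps: int = 300,
    lr: float = 4.0,
) -> List[float]:
    """Fit the knot values to `target` by gradient descent on mean squared error."""
    xs = [i / (num_samples - 1) for i in range(num_samples)]
    ys = [target(x) for x in xs]
    coeffs = [0.0] * num_knots
    for _ in range(steps):
        grads = [0.0] * num_knots
        for x, y in zip(xs, ys):
            left, frac = locate(x, num_knots)
            err = (1.0 - frac) * coeffs[left] + frac * coeffs[left + 1] - y
            # Only the two neighbouring knots influence the value at x.
            grads[left] += 2.0 * err * (1.0 - frac) / num_samples
            grads[left + 1] += 2.0 * err * frac / num_samples
        coeffs = [c - lr * g for c, g in zip(coeffs, grads)]
    return coeffs


def wave(x: float) -> float:
    """Example target: one period of a sine wave on [0, 1]."""
    return math.sin(2.0 * math.pi * x)


coeffs = fit_univariate(wave)
max_error = max(abs(evaluate(coeffs, i / 200) - wave(i / 200)) for i in range(201))
print(f"max abs error: {max_error:.4f}")
```

The number of trainable parameters here is just the number of knots, regardless of how many such univariate functions a larger model might combine. The fairly large learning rate is stable in this setting because each knot is touched by only a few samples, so the averaged loss has small curvature.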

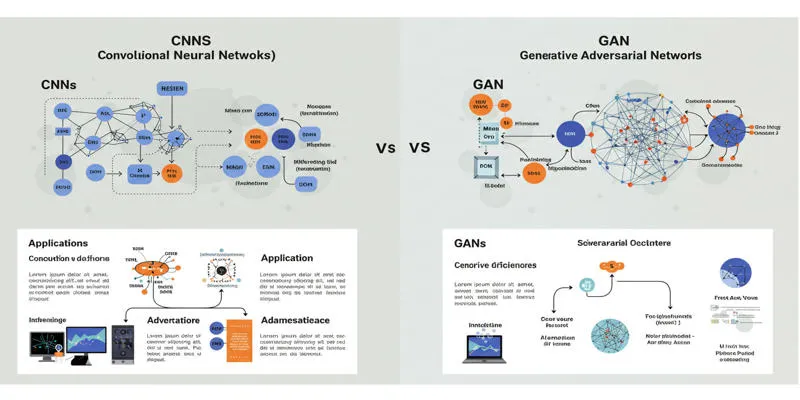

Structure of the Kolmogorov-Arnold Network

The Kolmogorov-Arnold network utilizes the theorem’s decomposition principle to simplify complex functions for computation. It dissects problems into manageable layers, facilitating efficient processing of high-dimensional data. This adaptable structure is beneficial across various applications.

Input Layer

The input layer connects raw high-dimensional data to the network, encoding it into a manageable form. This design is crucial for mapping high-dimensional inputs to simpler functions, optimizing tasks such as image processing and natural language processing by reducing complexity and handling intricate patterns with precision.

Hidden Layers

Hidden layers perform function decomposition, applying transformations based on the Kolmogorov-Arnold theorem to reduce dimensionality. Each layer expresses its computation through simpler, one-dimensional functions whose outputs are summed, capturing the complexity of the data in a usable form. This is what lets the network address high-dimensional problems in both theory and practice.
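One possible reading of such a layer, following the theorem's pattern of univariate functions combined by addition, is sketched below. Every connection carries its own univariate function and every node simply sums what arrives. The piecewise-linear edge functions, the names `edge_function` and `kan_layer`, and the hand-picked knot values are assumptions made for illustration, not a reference implementation.

```python
from typing import List

Grid = List[float]  # values of one edge function at uniform knots on [0, 1]


def edge_function(knot_values: Grid, x: float) -> float:
    """Piecewise-linear univariate function defined by its values at the knots.

    Inputs outside [0, 1] are clamped to the nearest end of the grid.
    """
    last = len(knot_values) - 1
    pos = min(max(x, 0.0), 1.0) * last
    left = min(int(pos), last - 1)
    frac = pos - left
    return (1.0 - frac) * knot_values[left] + frac * knot_values[left + 1]


def kan_layer(edges: List[List[Grid]], inputs: List[float]) -> List[float]:
    """edges[q][p] is the function on the edge from input p to output node q.

    Each output node adds up its incoming edge activations.
    """
    return [
        sum(edge_function(grid, x) for grid, x in zip(row, inputs))
        for row in edges
    ]


# Hypothetical knot values: 2 inputs -> 3 hidden nodes -> 1 output.
identity = [0.0, 0.5, 1.0]  # t -> t
flipped = [1.0, 0.5, 0.0]   # t -> 1 - t
tent = [0.0, 1.0, 0.0]      # peak of height 1 at t = 0.5

hidden_edges = [[identity, identity], [identity, flipped], [tent, tent]]
output_edges = [[identity, tent, flipped]]

hidden = kan_layer(hidden_edges, [0.25, 0.75])
output = kan_layer(output_edges, hidden)
print(hidden, output)  # [1.0, 0.5, 1.0] [2.0]
```

In a trained network the knot values would be learned rather than fixed by hand. Stacking calls to `kan_layer` mirrors the inner-then-outer structure of the representation formula.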

Output Layer

The output layer merges processed data from hidden layers into a final result, such as classifications or predictions. It ensures all transformations are cohesively combined, delivering accurate results tailored to specific tasks. The output layer is vital in solving high-dimensional problems in fields like AI, physics, and engineering, where precision is paramount.

Applications

Neural networks are essential tools in modern technology, offering extensive applications across industries to solve complex problems and drive innovation.

Artificial Intelligence and Machine Learning

Neural networks are central to advancements in AI and machine learning. From image recognition to natural language processing, they enable machines to learn, adapt, and make accurate predictions. These systems process large data volumes, identify patterns, and solve tasks with high precision. Their performance improves as researchers develop more advanced architectures and optimization methods.

Healthcare and Medical Diagnostics

The healthcare sector greatly benefits from neural networks, especially in diagnostics and predictive models. They analyze medical images like MRIs and CT scans for early disease detection, such as cancer, and predict patient outcomes using health data. These tools enhance diagnostic accuracy and aid medical professionals in providing timely, effective treatments.

Finance and Business

In finance and business, neural networks enhance decision-making and predictive analytics. They are employed for tasks like fraud detection, risk management, and algorithmic trading. By analyzing financial data and consumer behavior, they help businesses forecast trends, optimize strategies, and improve efficiency.

Conclusion

Neural networks have profoundly transformed diverse industries, from healthcare to finance, by enhancing efficiency, accuracy, and innovation. Their capacity to process extensive data and uncover patterns has revolutionized decision-making and problem-solving. However, as these technologies evolve, it is crucial to address ethical concerns, transparency, and potential biases to ensure responsible implementation.
