Understanding BERT: The AI Revolution in Natural Language Processing

For decades, machines struggled to understand human language, often missing context and subtlety. That changed with the introduction of BERT (Bidirectional Encoder Representations from Transformers). Earlier approaches typically gave each word a single fixed representation or read text in one direction only; BERT instead represents each word using the full sentence around it. Developed by Google, BERT has improved systems ranging from search engines to digital assistants, making their handling of language more precise.

From helping chatbots interpret user messages to enhancing medical text analysis, BERT has changed how machines interpret language. In this article, we explore what BERT is and why it has been so influential in natural language processing (NLP).

What is the BERT Model?

BERT is a pretrained language model that produces context-dependent representations of text. Unlike older models that read text only from left to right (or right to left), BERT processes text bidirectionally: the representation of each word draws on the words both before and after it. This use of context on both sides makes BERT better at capturing the full meaning of a sentence.

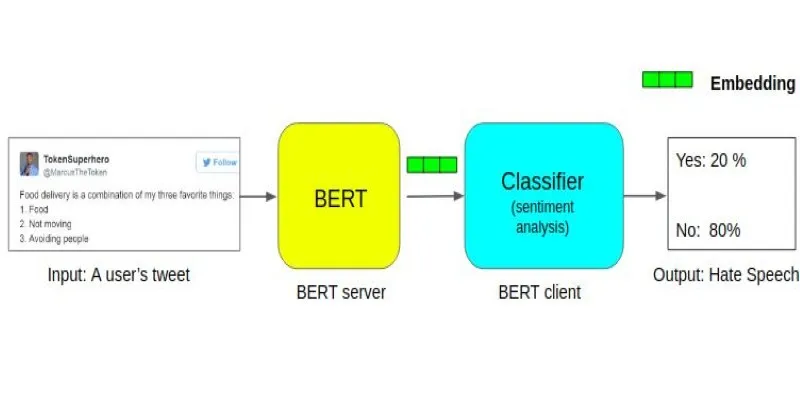

Developed by Google in 2018, BERT is built on the encoder of the transformer architecture, which is known for handling long-range dependencies in text. This architecture lets the model process an entire sequence of words together rather than one word at a time. BERT performs well on a range of NLP tasks, including question answering, language inference, and sentiment analysis.

How Does BERT Work?

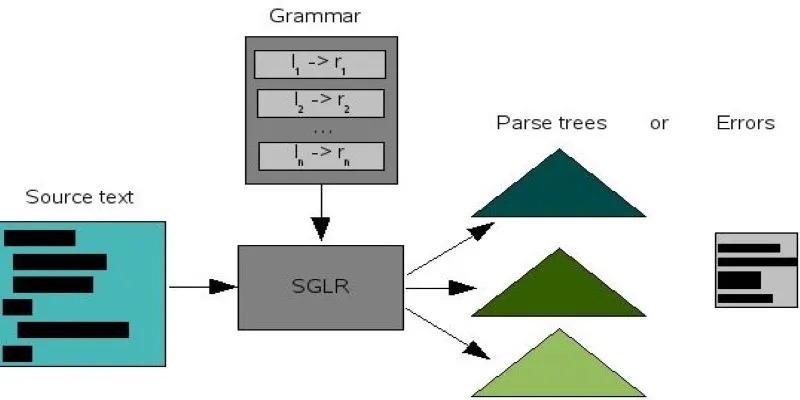

BERT relies on two main components: tokenization and transformers. Tokenization breaks text into smaller units called “tokens,” which can be whole words, subwords, or punctuation marks. BERT uses WordPiece subword tokenization, so a rare word may be split into several pieces while a common word stays whole. Once the text is tokenized, the transformer architecture takes over.
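The subword splitting described above can be sketched in a few lines of Python. This is a minimal illustration of greedy longest-match-first splitting in the style of WordPiece, not BERT's actual tokenizer: the vocabulary `TOY_VOCAB` and the helper names `wordpiece_tokenize` and `tokenize` are assumptions made up for this example, and a real vocabulary contains roughly tens of thousands of entries.

```python
def wordpiece_tokenize(word, vocab, unk_token="[UNK]"):
    """Greedy longest-match-first split of one word into subword pieces."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it.
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # "##" marks a continuation piece
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return [unk_token]  # no piece fits, so the whole word is unknown
        pieces.append(match)
        start = end
    return pieces


# Hypothetical toy vocabulary, for illustration only.
TOY_VOCAB = {"the", "bank", "was", "closed", "play", "##ing", "##ed", "."}


def tokenize(text, vocab=TOY_VOCAB):
    """Lowercase, split off full stops, then split each word into pieces."""
    tokens = []
    for word in text.lower().replace(".", " .").split():
        tokens.extend(wordpiece_tokenize(word, vocab))
    return tokens


print(tokenize("The bank was closed."))  # ['the', 'bank', 'was', 'closed', '.']
print(wordpiece_tokenize("playing", TOY_VOCAB))  # ['play', '##ing']
```

Words that appear in the vocabulary stay whole, while "playing" is split into a stem and a continuation piece, which is how a fixed vocabulary can still cover words it has never seen as a unit.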

The transformer is a deep learning architecture that excels at processing sequences of data, such as sentences or paragraphs. What distinguishes BERT is that it applies the transformer bidirectionally. Traditional language models read a sentence sequentially, either from left to right or from right to left, whereas BERT lets every token attend to every other token in the input, on both sides at once, which captures richer context and meaning.

For instance, consider the sentence: “The bank was closed.” A model that reads only from left to right must represent “bank” before it has seen “was closed,” so it cannot use those words to decide whether “bank” refers to a financial institution or a riverbank. BERT sees the whole sentence at once and can use the words on both sides of “bank” to interpret it.
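The contrast between one-directional and bidirectional reading can be made concrete with attention weights. The sketch below computes scaled dot-product attention weights in plain Python, once with a causal (left-to-right) mask and once without. The two-dimensional token vectors are hypothetical values chosen for illustration; a trained model learns its own vectors and uses separate query and key projections, which this sketch omits.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(queries, keys, causal=False):
    """Return one row of attention weights per token position."""
    dim = len(queries[0])
    rows = []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # hide tokens to the right
            else:
                dot = sum(a * b for a, b in zip(q, k))
                scores.append(dot / math.sqrt(dim))
        rows.append(softmax(scores))
    return rows


tokens = ["the", "bank", "was", "closed"]
# Hypothetical 2-d vectors, one per token, for illustration only.
vectors = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0], [1.0, 1.0]]

bidirectional = attention_weights(vectors, vectors)
left_to_right = attention_weights(vectors, vectors, causal=True)

bank = tokens.index("bank")
print("bidirectional:", bidirectional[bank])
print("left-to-right:", left_to_right[bank])
```

In the left-to-right case the row for "bank" puts zero weight on "was" and "closed", while the bidirectional row spreads weight over the whole sentence, which is the property the paragraph above describes.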

Another key feature of BERT is masked language modeling (MLM), the main objective used to pretrain it. In MLM, a fraction of the tokens in each training sequence (15% in the original BERT setup) is randomly selected and hidden, and the model predicts the missing tokens from the surrounding context. Because the clues can lie on either side of a hidden token, this task forces the model to learn word relationships and meanings in both directions.
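The masking step can be sketched as follows. This is a simplified illustration, not BERT's training code: it selects each token independently with probability `mask_prob`, then applies the replacement rule from the original BERT setup, where a selected token becomes `[MASK]` 80% of the time, a random token 10% of the time, and stays unchanged 10% of the time. The function name `mask_tokens` and the toy vocabulary are assumptions made for this example.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, vocab, mask_prob=0.15, seed=None):
    """Return (inputs, labels); labels hold the original token at
    positions the model must predict and None everywhere else."""
    rng = random.Random(seed)
    inputs = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected for prediction
        labels[i] = token  # the model must recover the original token
        roll = rng.random()
        if roll < 0.8:
            inputs[i] = MASK  # 80%: replace with the mask token
        elif roll < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token in place
    return inputs, labels


# Hypothetical toy vocabulary, for illustration only.
toy_vocab = ["the", "bank", "was", "closed", "river", "money"]
sentence = ["the", "bank", "was", "closed"]

inputs, labels = mask_tokens(sentence, toy_vocab, mask_prob=0.5, seed=0)
print(inputs)
print(labels)
```

The example uses `mask_prob=0.5` only so that a four-token sentence is likely to show at least one selected position; with the 15% rate a sentence this short would often come back unchanged.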

The Impact of BERT on Natural Language Processing

BERT has had a transformative impact on NLP. Before BERT, many models were limited in how much sentence context they could use, and they often made errors on ambiguous phrases or words with multiple meanings. With BERT, the representation of each word depends on the broader context rather than on the word alone.

This has led to significant improvements in various NLP tasks. Search is a prominent example: Google announced in 2019 that it uses BERT in Search. When you type a query, BERT helps the search engine interpret the meaning behind your words so that it can return more relevant results. This matters most for longer, conversational queries, where small words and word order change the meaning.

BERT has also improved other NLP applications, most directly sentiment analysis, and it has been used as a component in translation and text summarization systems. By helping machines capture nuances of language, BERT has paved the way for more accurate and efficient AI-driven systems.

Moreover, BERT’s open-source release has allowed researchers and developers to experiment with and build upon its architecture. This accessibility has sparked innovation in the AI community, with numerous advancements and applications emerging from BERT’s core principles.

Real-World Applications of BERT

BERT’s applications extend far beyond Google’s search engine. One frequently cited area is virtual assistants such as Siri, Alexa, and Google Assistant. These systems rely heavily on NLP to understand and respond to user commands. BERT-style language models can help such assistants interpret queries more accurately by taking context into account, which leads to more relevant responses.

BERT is also making strides in the healthcare industry. Models based on BERT can help interpret medical records, assist with clinical decision-making, and support medical research. By analyzing large volumes of text, they can surface patterns and correlations that might otherwise go unnoticed, which may help improve patient outcomes and streamline healthcare processes.

Another area where BERT is impactful is customer support. Chatbots and automated support systems that use BERT to interpret messages can better understand customer inquiries and route or answer them faster and more accurately. This can reduce the need for human intervention and improve the overall customer experience.

Conclusion

The BERT model represents a major leap forward in NLP. By combining bidirectional transformers with masked language modeling, BERT lets machines interpret language with far more depth and accuracy than earlier models. Its applications span industries, from search engines and virtual assistants to healthcare and customer service, changing how we interact with AI. As researchers continue to build on BERT, its influence on AI and language processing is likely to keep growing.
