The rise in concern about how Artificial Intelligence (AI) is being built, used, and regulated has been steady. For open-source developers, the introduction of the EU AI Act isn’t just background noise—it’s a direct call to examine how their projects align with a sweeping legal framework. The law doesn’t target only major corporations.

Anyone releasing or contributing to AI tools that may affect people in the EU could fall under its scope. Understanding the implications can be daunting, but open-source contributors need a clear picture of what this act means for their work and the choices they now face.

Decoding the EU AI Act for Open Source

The EU AI Act is the first broad legislative effort to regulate AI systems across a wide range of use cases. Its main goal is to ensure that AI tools used in the European Union are safe, transparent, and respect basic rights. This includes a layered risk-based approach—AI systems are sorted into different categories, from minimal to unacceptable risk. Open source projects, even when developed outside the EU, can be affected if their tools are deployed within EU member states.

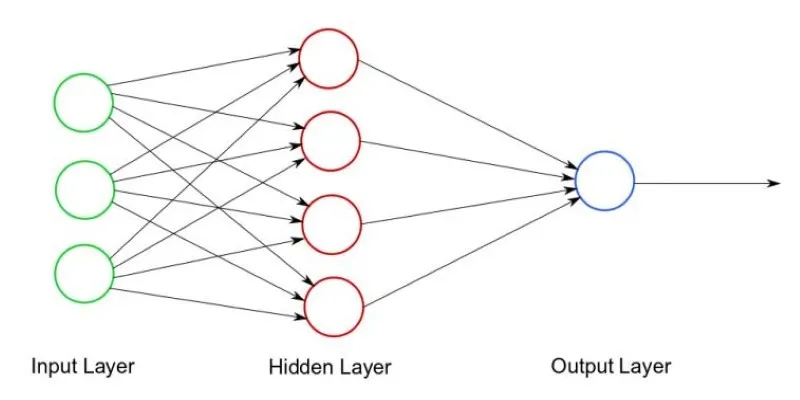

The act recognizes the complexity of AI ecosystems. It doesn’t explicitly ban open-source development, but it does draw a line when open-source tools are used in high-risk applications. Think facial recognition, medical diagnostics, employment screening, or credit scoring. If someone takes a publicly available AI model and applies it in these areas, the system might be subject to the act—even if the original developers didn’t build it with those uses in mind.

This puts open source developers in an odd position. You’re not always in control of how your code gets used. Yet the act’s language about providers, importers, and deployers can blur the lines around accountability. Depending on the level of influence a contributor or maintainer has over how the model functions or is distributed, they may find themselves drawn to compliance requirements.

Navigating Risk Levels and Responsibilities

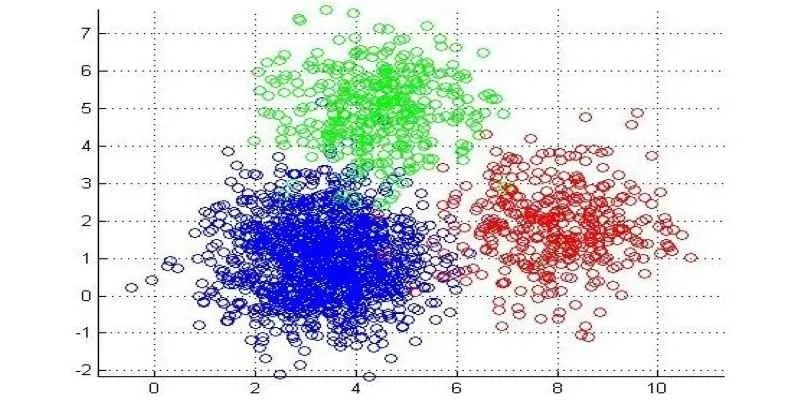

The EU AI Act divides AI systems into several risk tiers. Most open-source models used for research or general-purpose functions fall into the low or minimal-risk category. These don’t require any compliance beyond basic transparency. However, when an AI system is adapted for high-risk areas—such as biometric identification or recruitment—developers need to think more carefully.

Open-source models used in high-risk systems must meet strict standards around accuracy, data governance, logging, human oversight, and cybersecurity. While it’s the deployer—usually the person or company integrating the model—who must prove compliance, the original developers may be asked to provide documentation, testing methods, or version tracking.

This raises a concern. Many open source developers lack the resources or incentives to maintain compliance documentation or monitor how their code is being used. Still, if a model you maintain is being frequently adapted for high-risk applications, you might consider offering usage guidelines or restricting certain features.

A new class of AI models, called general-purpose AI systems (GPAI), also enters the conversation. These include large language models and multi-modal systems capable of being adapted for countless uses. Developers of GPAIs—whether open or proprietary—may face special transparency requirements, such as summaries of the data used during training and information about performance limitations.

Compliance Doesn’t Mean Corporate Overhead

One concern among the open-source community is that regulation will stifle innovation or drive contributors away from projects. But the EU AI Act includes some breathing room. It has carve-outs for non-commercial research and open-source code that isn’t distributed for profit. The key is how the code is used, not just how it’s shared.

For instance, if you build a language model and release it under an open license without monetization, you’re likely outside the act’s reach—unless your tool is repeatedly adapted for high-risk use. But if you offer paid support, integrate cloud APIs, or publish commercial add-ons, you might be classified as a provider, which brings obligations.

Another point is that the act encourages transparency and documentation, but it doesn’t demand complex bureaucratic procedures from individual contributors. If your project is aimed at education, experimentation, or public understanding of AI, compliance is generally low-touch.

Still, having a basic documentation process—version control, model cards, training data notes—helps both users and regulators understand the intent and limitations of your tool. It’s not just about legality; it builds trust in your work. And if your open-source model becomes popular, that trust becomes a foundation for its adoption.

Future-Proofing Your Work

Even though the EU AI Act is centered in Europe, its effects will reach beyond. If your open-source model is widely used, there’s a good chance it will cross borders. That makes it worth taking steps now to help your project stay adaptable. Start with clear licensing. Say if your model is for research only if it’s suitable for high-risk uses or if there are limitations. Keep a changelog and tag major versions so users know what changed. When possible, include training data sources or explain your data choices. This helps users and may prevent misuse.

There is also a shift toward shared governance in open-source AI. Instead of one person bearing all responsibility, some projects are introducing transparency boards, usage tracking, or community oversight. These aren’t legal rules, but they can keep projects aligned with public expectations and new laws.

The EU AI Act doesn’t exist to restrict open work. Its focus is on safety and accountability. For open source developers, the task is to stay agile without being caught off guard by changing rules.

Conclusion

The EU AI Act isn’t a roadblock for open source developers—it’s a reminder that AI now has real-world consequences. You don’t need to overhaul your project, but staying aware of how your tools might be used is worth the effort. A bit of clarity around purpose, documentation, and limitations can go a long way. Open collaboration still matters, but it now shares space with responsibility. Understanding the rules early can help you keep creating without getting caught off guard.

zfn9

zfn9