Artificial Intelligence (AI) has become an integral part of our daily lives, influencing everything from streaming recommendations to life-saving healthcare tools. However, as AI systems increasingly shape critical decisions, the demand for transparency and accountability grows. Understanding how AI operates and addressing potential biases is crucial for ensuring ethical use, building trust, and creating technology that serves humanity responsibly.

What is AI Transparency?

Artificial Intelligence is already embedded in our daily activities, assisting with tasks like driving automobiles, making product suggestions, and responding to web inquiries. Yet the mechanisms driving AI systems’ decisions often remain opaque to users. AI transparency seeks to address this gap.

AI transparency involves organizations explaining how their systems function, detailing which data is used, and clarifying the reasons behind specific decisions. The goal is to make AI systems understandable to everyday users, not only to subject-matter experts. By maintaining clarity, organizations can foster trust and acceptance among users.

Why Do We Need AI Transparency?

As AI takes on a growing share of everyday decisions, visibility into how it works becomes essential. Transparency lets users understand how specific decisions are reached, which makes accountability possible in critical domains like healthcare, hiring, and criminal justice. Without it, AI systems can perpetuate biases and errors because their inner workings cannot be examined or challenged.

Building Trust in Technology

Uncertainty often breeds apprehension. When a system makes decisions affecting your life, such as loan approvals or disease diagnoses, understanding the “why” and “how” is crucial. Transparent AI systems provide this understanding, fostering trust between users and technology.

Encouraging Fairness

AI systems can exhibit bias if trained on unfair or incomplete data. For instance, if an AI learns from past unfair hiring practices, it may continue to perpetuate that unfairness. Transparency allows for early detection of such problems. By sharing how AI models are trained and tested, developers can more easily identify and rectify biases.
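
One way such a check might look in practice is to compare how often each group receives a positive outcome. The sketch below is only illustrative: the group names and hiring records are invented, and the ratio of lowest to highest selection rate is one common heuristic among several fairness measures, not a complete bias test.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of positive outcomes per group.

    `records` is an iterable of (group, selected) pairs, where
    `selected` is True when the candidate was chosen.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest selection rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical historical hiring outcomes, invented for illustration
history = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(history)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

A ratio well below 1.0, as in this made-up data, would be a signal to look more closely at the training data before a model learns from it.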

Helping Regulation and Compliance

Many governments are now enacting laws that demand AI transparency. The European Union’s AI Act, for example, imposes transparency obligations on certain AI systems. Without transparency, companies risk legal challenges. Being open about AI systems helps companies stay ahead of regulations and avoid fines or lawsuits.

Key Elements of AI Transparency

To ensure AI transparency, several key elements must be prioritized. These elements lay the foundation for creating AI systems that are understandable, fair, and accountable. By addressing these components, organizations can build trust with users and stakeholders while enabling responsible AI development and deployment.

Data Transparency

Data is the cornerstone of any AI system. Data transparency involves sharing information about data sources, collection methods, and potential biases. It also includes informing users if sensitive data, such as personal information, is being used, helping them understand how data shapes AI decisions.
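
As a rough illustration, the information described above could be captured in a small structured record that is published alongside a model. The `DataSheet` class, its field names, and the example values are all hypothetical, chosen only to mirror the items listed in this section.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DataSheet:
    """Hypothetical record of what a dataset contains and how it was gathered."""
    name: str
    sources: List[str]
    collection_method: str
    known_biases: List[str] = field(default_factory=list)
    sensitive_fields: List[str] = field(default_factory=list)

    def summary(self) -> str:
        """Render the sheet as plain text that a non-expert can read."""
        lines = [
            f"Dataset: {self.name}",
            f"Sources: {', '.join(self.sources)}",
            f"Collected via: {self.collection_method}",
            f"Known biases: {', '.join(self.known_biases) or 'none documented'}",
            f"Sensitive data used: {', '.join(self.sensitive_fields) or 'none'}",
        ]
        return "\n".join(lines)


# Example values are invented for illustration
sheet = DataSheet(
    name="loan_applications",
    sources=["internal application forms"],
    collection_method="submitted by applicants online",
    known_biases=["under-represents applicants without internet access"],
    sensitive_fields=["date_of_birth"],
)
print(sheet.summary())
```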

Algorithm Transparency

Algorithms are sets of instructions guiding AI systems. Algorithm transparency requires explaining the types of models used and how decisions are made. While it doesn’t mean revealing business secrets, it does involve providing enough information for users to grasp the basics.

Decision Transparency

Decision transparency focuses on the rationale behind AI actions. If an AI system approves your mortgage application or denies your health insurance, you should be able to ask, “Why?” and receive a clear answer. This shows respect for users and supports fairness.
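
To make the idea concrete, here is a minimal sketch of a decision that carries its own reasons. It assumes a simple weighted score; the feature names, weights, and threshold are invented, and real systems are usually far more complex and need dedicated explanation techniques.

```python
# Hypothetical weights and threshold, invented for illustration only
WEIGHTS = {
    "income_to_debt_ratio": 2.0,
    "years_employed": 0.5,
    "missed_payments": -1.5,
}
THRESHOLD = 4.0


def decide_with_reasons(applicant):
    """Score an applicant and report which factors drove the outcome."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = sum(contributions.values())
    approved = score >= THRESHOLD
    if approved:
        # Factors that raised the score, largest first
        reasons = sorted(
            (item for item in contributions.items() if item[1] > 0),
            key=lambda item: -item[1],
        )
    else:
        # Factors that lowered the score, most negative first
        reasons = sorted(
            (item for item in contributions.items() if item[1] < 0),
            key=lambda item: item[1],
        )
    return {"approved": approved, "score": score, "reasons": reasons}


result = decide_with_reasons(
    {"income_to_debt_ratio": 1.5, "years_employed": 2, "missed_payments": 3}
)
print(result)
```

Because the reasons are returned together with the decision, the answer to "Why?" is available at the moment the decision is made rather than reconstructed afterwards.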

Challenges in Achieving AI Transparency

Achieving AI transparency involves both technical and ethical challenges. Organizations must balance the need for clear explanations against proprietary protections and the inherent intricacy of AI models.

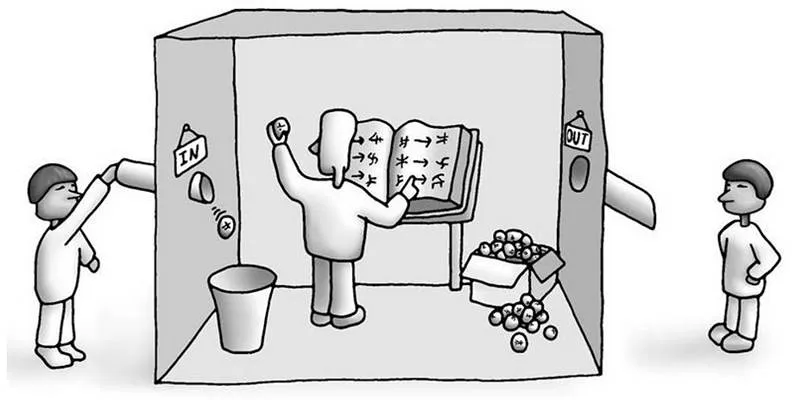

Complexity of AI Systems

Many AI models, especially deep learning models, are highly complex and involve millions of calculations that even experts find hard to follow. Explaining these models in terms users can understand is a significant challenge, but an essential one.

Balancing Transparency and Security

Excessive transparency can pose risks. Revealing too much about an AI system may enable bad actors to exploit it. Companies must find a balance between openness and system security.

Protecting Intellectual Property

Businesses invest significant resources in developing AI models and may fear that excessive transparency could reveal their secrets to competitors. Protecting intellectual property while providing sufficient information for user trust is crucial.

Best Practices for AI Transparency

Achieving AI transparency requires a thoughtful approach to ensure both trust and practicality. Implementing best practices helps organizations balance openness with security and privacy needs.

Using Explainable AI (XAI)

Explainable AI (XAI) refers to systems designed to provide human-understandable explanations for their decisions. XAI models offer reasons for their actions, helping users trust AI decisions and enabling developers to improve their systems.

Providing Clear Documentation

Clear and straightforward documentation is vital. Companies should create user guides and technical manuals that explain their AI systems. These documents should be accessible to both experts and everyday users.

Offering User-Friendly Explanations

Whenever possible, AI systems should directly explain their decisions to users. For example, a chatbot might say, “I recommended this product because you liked similar items before.” Simple explanations like these build trust and understanding.

Regular Auditing and Testing

AI systems should undergo regular testing to ensure they function as expected. Independent audits can identify biases, errors, and other issues. Sharing audit results with users or regulators demonstrates a company’s commitment to transparency.
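
A recurring audit could be as simple as comparing a model's predictions with known outcomes for each group and flagging large gaps. The following sketch assumes labeled evaluation data is available; the rows, the group names, and the 0.1 tolerance are invented for illustration and are not a recommended standard.

```python
def audit_error_rates(rows, max_gap=0.1):
    """Compare error rates across groups.

    `rows` is an iterable of (group, predicted, actual) tuples.
    The audit passes when the gap between the highest and lowest
    group error rate is at most `max_gap`.
    """
    counts = {}
    for group, predicted, actual in rows:
        total, errors = counts.get(group, (0, 0))
        counts[group] = (total + 1, errors + int(predicted != actual))
    error_rates = {group: errors / total for group, (total, errors) in counts.items()}
    gap = max(error_rates.values()) - min(error_rates.values())
    return {"error_rates": error_rates, "gap": gap, "passed": gap <= max_gap}


# Hypothetical evaluation data: group_a has 1 error in 10, group_b has 3 in 10
rows = (
    [("group_a", 1, 1)] * 9 + [("group_a", 1, 0)]
    + [("group_b", 0, 0)] * 7 + [("group_b", 1, 0)] * 3
)

report = audit_error_rates(rows)
print(report)
```

A report like this is small enough to share with users or regulators as part of the audit results.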

The Future of AI Transparency

Transparency will play a pivotal role in shaping the future of AI, ensuring these systems remain fair, ethical, and aligned with human values.

Growing Public Awareness

As AI becomes more prevalent, public curiosity about its workings increases. The demand for transparency will continue to grow, giving companies that embrace openness a competitive edge.

More Transparent AI Tools

New tools are being developed to explain AI models, with the aim of making even highly complex systems easier to understand. As these tools mature, achieving AI transparency will become more feasible.

Stronger Regulations

Governments worldwide are implementing laws requiring AI transparency. In the future, companies will face stricter standards. Proactively building transparent AI systems is a wise strategy to stay ahead of regulations.

Conclusion

AI transparency extends beyond technical aspects; it encompasses trust, fairness, and respect. When people understand how AI systems make decisions, they are more comfortable using them. Transparency aids in developing better AI, protects users’ rights, and ensures companies comply with legal standards. Companies, developers, and governments must collaborate to create AI systems that are open, fair, and comprehensible, ensuring that AI benefits everyone equitably and responsibly.
